📝 Research: https://ojitha.blogspot.com.au for my lengthy articles.

LLM Wiki

April 8, 2026

The Andrej Karpathy Obsidian method refers to his publicly shared system for using LLMs to build and maintain personal knowledge bases as interlinked markdown wikis, all viewed and navigated through Obsidian. He calls the system LLM Wiki or LLM Knowledge Bases.
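As a rough illustration of what "interlinked markdown" means in practice, the sketch below scans a few notes for Obsidian-style `[[wikilinks]]` and builds a backlink index. The note titles and bodies are made up for the example, and `build_backlinks` is a hypothetical helper, not part of Karpathy's published system.

```python
import re
from collections import defaultdict

# Obsidian-style wikilink: [[Target]], [[Target|alias]] or [[Target#Heading]].
# Only the target note title (group 1) is captured.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def build_backlinks(notes):
    """Map each linked note title to the set of notes that link to it."""
    backlinks = defaultdict(set)
    for title, body in notes.items():
        for match in WIKILINK.finditer(body):
            backlinks[match.group(1).strip()].add(title)
    return dict(backlinks)


# Hypothetical notes, keyed by title (assumption: one markdown file per title).
notes = {
    "Transformers": "Built on [[Attention]] and trained with [[Backpropagation|backprop]].",
    "Attention": "Core idea behind [[Transformers]].",
    "Backpropagation": "See [[Attention#Gradients]] for an example.",
}

backlinks = build_backlinks(notes)
for target in sorted(backlinks):
    print(target, "<-", sorted(backlinks[target]))
```

An index like this is the kind of structure an LLM could consult when deciding which existing pages to update or link from a new note.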

Running AMD ROCm AI Workloads locally

March 7, 2026

This guide demonstrates how to run AMD ROCm AI workloads on the MINISFORUM AI X1 Pro-470 mini PC powered by the AMD Ryzen AI 9 HX 470 (12-core Zen5, up to 5.2GHz), featuring the integrated Radeon 890M (gfx1150) GPU and an 86 TOPS NPU. On Ubuntu with the OEM kernel, the setup includes installing ROCm 7.2, verifying HSA agents with rocminfo, and deploying PyTorch in Docker containers. It also details configuring MIGraphX and ONNX Runtime with the MIGraphX Execution Provider via Docker Compose, enabling high-performance on-device ML inference that is fully local, with no discrete GPU required.
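A quick sanity check from inside such a container might look like the sketch below. It assumes only that ROCm builds of PyTorch expose the GPU through the usual `torch.cuda` API and that ONNX Runtime lists the MIGraphX provider under the name `MIGraphXExecutionProvider`. The `probe_rocm_stack` helper is hypothetical, and it degrades to `False` values when a package is not installed.

```python
def probe_rocm_stack():
    """Report which parts of the local inference stack are importable and GPU-backed."""
    report = {
        "torch_installed": False,
        "gpu_visible": False,
        "hip_version": None,
        "onnxruntime_installed": False,
        "migraphx_provider": False,
    }

    try:
        import torch
    except ImportError:
        torch = None
    if torch is not None:
        report["torch_installed"] = True
        # ROCm builds of PyTorch reuse the torch.cuda namespace for AMD GPUs.
        report["gpu_visible"] = bool(torch.cuda.is_available())
        # torch.version.hip is a version string on ROCm builds, None otherwise.
        report["hip_version"] = getattr(torch.version, "hip", None)

    try:
        import onnxruntime as ort
    except ImportError:
        ort = None
    if ort is not None:
        report["onnxruntime_installed"] = True
        report["migraphx_provider"] = (
            "MIGraphXExecutionProvider" in ort.get_available_providers()
        )

    return report


report = probe_rocm_stack()
for key, value in report.items():
    print(f"{key}: {value}")
```

If `gpu_visible` is `False` while `rocminfo` lists the GPU on the host, the container was probably started without access to the GPU devices.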

Kubernetes Introduction

January 3, 2026

This post provides a practical introduction to Kubernetes, focusing on essential networking concepts and hands-on debugging techniques within a Linux-based cluster. It guides readers through inspecting node roles, understanding Container Network Interfaces (CNIs) such as Flannel, and analysing overlay networks using tools such as `ip route` and `crictl`. The tutorial examines kube-proxy modes, service discovery via CoreDNS, and the deployment of multi-tier applications using Redis and Nginx. Furthermore, it demonstrates how to expose services using NodePort, scale deployments for high availability, and utilise `kubectl exec` and `cp` for effective pod interaction and troubleshooting.

ICIJ Fraud Analysis

December 6, 2025

Uncover the hidden wealth of nations by analysing the ICIJ Offshore Leaks Database using Apache Spark and Scala. This technical guide demonstrates how to process more than 810,000 offshore entities identified in the Panama and Pandora Papers to detect financial fraud. We walk through the process of defining Schema Case Classes for nodes and relationships, loading CSV data into Spark Datasets, and executing complex multi-hop graph joins. Learn to reconstruct fragmented data into complete entity profiles, map beneficial ownership networks, and identify suspicious shell companies sharing registered addresses. Master graph database analysis techniques to expose global corruption and money laundering structures effectively.
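The shared-address check can be sketched without Spark. The example below is plain Python standing in for the Spark and Scala joins the post describes. The sample rows, the field names (`start`, `end`, `rel_type`), and the `shared_addresses` helper are all illustrative assumptions rather than the real ICIJ schema.

```python
from collections import defaultdict

# Hypothetical sample data; IDs, names, and field names are assumptions.
entities = {1: "Alpha Holdings Ltd", 2: "Beta Trading Inc", 3: "Gamma Ventures SA"}
addresses = {10: "1 Harbour Road, Tortola", 11: "22 Palm Street, Panama City"}
relationships = [
    {"start": 1, "end": 10, "rel_type": "registered_address"},
    {"start": 2, "end": 10, "rel_type": "registered_address"},
    {"start": 3, "end": 11, "rel_type": "registered_address"},
    {"start": 1, "end": 2, "rel_type": "officer_of"},
]


def shared_addresses(entities, addresses, relationships, min_entities=2):
    """Return addresses that at least `min_entities` entities are registered at."""
    by_address = defaultdict(set)
    for rel in relationships:
        # Keep only entity -> address edges (the "join" against both node tables).
        if rel["rel_type"] != "registered_address":
            continue
        if rel["start"] in entities and rel["end"] in addresses:
            by_address[rel["end"]].add(rel["start"])
    return {
        addresses[address_id]: sorted(entities[e] for e in entity_ids)
        for address_id, entity_ids in by_address.items()
        if len(entity_ids) >= min_entities
    }


suspicious = shared_addresses(entities, addresses, relationships)
for address, names in suspicious.items():
    print(address, "->", names)
```

In a distributed setting the same logic would be expressed as a join between the relationship table and the two node tables, followed by a group and filter on the address key.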

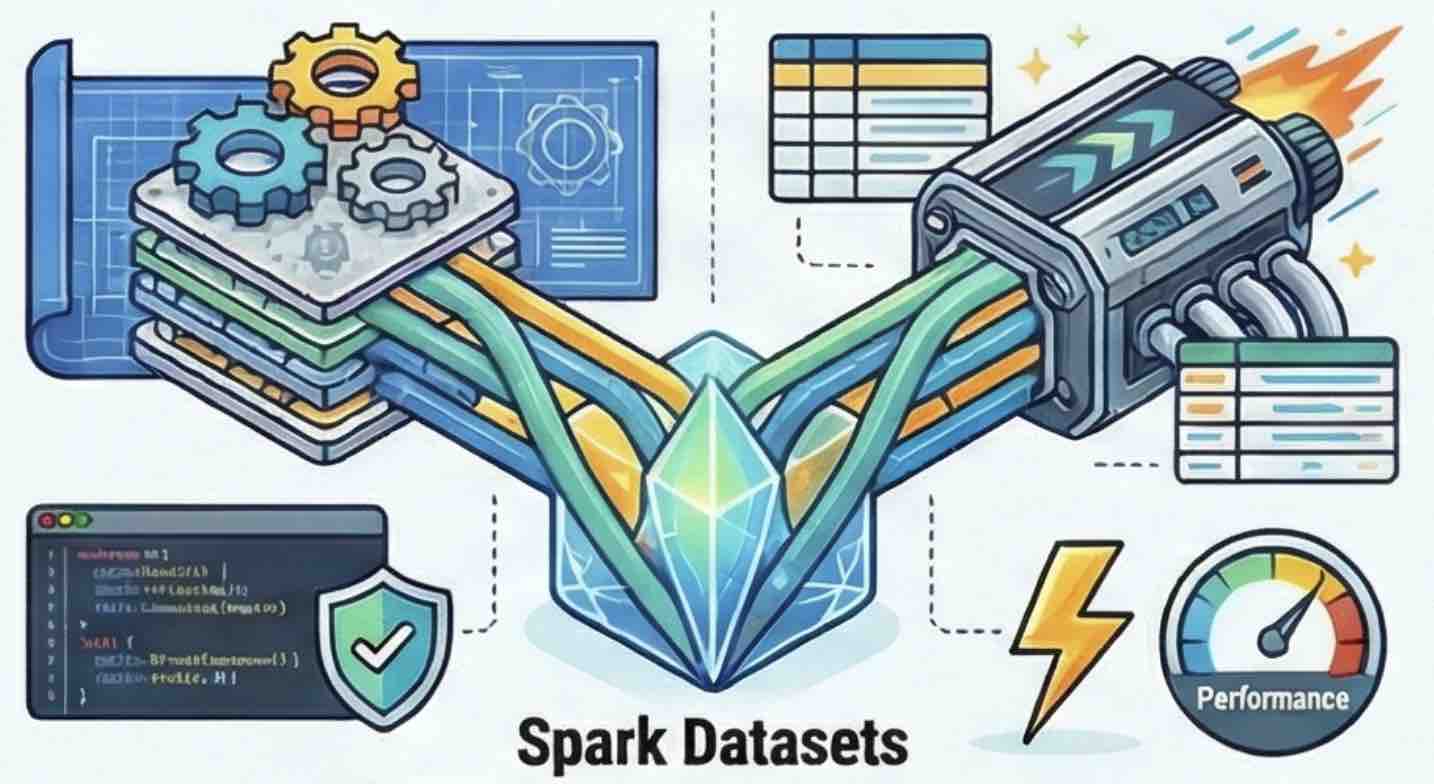

Spark Dataset APIs

November 7, 2025

This comprehensive technical guide covers the Apache Spark Dataset API, defining it as a distributed collection that provides type safety while benefiting from the performance optimisations of the Catalyst Optimiser. It explains key internal mechanisms, such as Encoders, which manage the serialisation between domain-specific JVM objects and Spark’s internal binary format, using the MovieLens dataset to illustrate conceptual data entities. The text analyses fundamental transformations, including functional narrow transformations such as `map` and `flatMap`, and contrasts the standard, untyped `join` with the type-safe `joinWith` operation. Furthermore, the guide highlights significant performance considerations for wide transformations, noting that `groupByKey` requires a full data shuffle and lacks the map-side combine optimisation available in the standard DataFrame `groupBy`. Finally, the guide scrutinises a physical query plan to detail how Adaptive Query Execution (AQE) dynamically optimises resource usage by adjusting partition sizes based on runtime statistics.
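To see why the missing map-side combine matters, the sketch below simulates the two strategies in plain Python, with no Spark involved. The partitions, genre keys, and rating values are invented sample data, and the function names are hypothetical. It compares how many records would cross the shuffle boundary when every record is shipped versus when each partition pre-aggregates first.

```python
from collections import defaultdict

# Two hypothetical input partitions of (genre, rating) pairs.
partitions = [
    [("action", 4.0), ("drama", 3.0), ("action", 5.0)],
    [("drama", 4.0), ("action", 3.0), ("drama", 2.0)],
]


def shuffle_without_combine(partitions):
    """Ship every raw record across the shuffle, as a full shuffle would."""
    return [record for part in partitions for record in part]


def shuffle_with_combine(partitions):
    """Pre-aggregate (sum, count) per key inside each partition before shuffling."""
    shuffled = []
    for part in partitions:
        partial = defaultdict(lambda: [0.0, 0])
        for key, value in part:
            partial[key][0] += value
            partial[key][1] += 1
        shuffled.extend((key, (total, count)) for key, (total, count) in partial.items())
    return shuffled


def average_from_raw(records):
    """Reduce-side average over raw (key, value) records."""
    totals = defaultdict(lambda: [0.0, 0])
    for key, value in records:
        totals[key][0] += value
        totals[key][1] += 1
    return {key: total / count for key, (total, count) in totals.items()}


def average_from_partials(records):
    """Reduce-side average over (key, (sum, count)) partial aggregates."""
    totals = defaultdict(lambda: [0.0, 0])
    for key, (total, count) in records:
        totals[key][0] += total
        totals[key][1] += count
    return {key: total / count for key, (total, count) in totals.items()}


raw = shuffle_without_combine(partitions)
combined = shuffle_with_combine(partitions)
print("records shuffled without combine:", len(raw))
print("records shuffled with combine:", len(combined))
print("averages agree:", average_from_raw(raw) == average_from_partials(combined))
```

Both paths produce the same averages, but the pre-aggregated path shuffles one record per key per partition instead of one per input row, and that gap grows with the number of rows per key.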